Data Engine

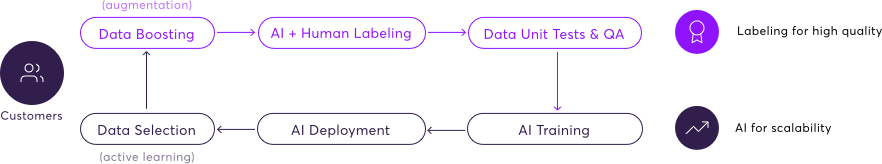

The super.AI data engine uses a feedback loop to improve the output from both our human labelers and the models that our meta AI generates.

Enterprise-only AI features

As an AI-enabled feature, the data engine is only available to enterprise clients. To learn more, talk to our sales department: [email protected].

There are 6 stages inside the data engine loop:

1. AI and human data labeling

The first and primary stage is to label input data with guaranteed quality, cost, and speed. You can learn more about this stage on our AI compiler page. The first loop uses only human labelers. AI joins as a labeling source from the second loop onward, once stages 3 and 4 below have run for the first time.

2. Data unit tests and QA

Super.AI applies a quality assurance (QA) process across 4 key areas: tasks, labelers, instructions, and data. You can learn about these measures in full on our QA page. If anything is flagged, we generate a unit test that checks whether we got it right. We can use this information later to improve the AI compiler’s performance on difficult tasks.

3. AI training

We use the outputs created during the labeling process along with the outcomes from the QA stage to begin training AI models that the meta AI module has produced.

4. AI deployment

The models move into the super.AI system and become labeling sources.

5. Data selection

Based on all of the previous steps, super.AI conducts a form of active learning where we select the data that could produce the largest theoretical improvement to the quality, cost, and speed of the AI compiler and the meta AI models.

6. Data boosting

This could also be called data augmentation. In addition to the active learning that takes place in the data selection stage, we actively generate data (both synthetically and from real data) that is similar to the data on which the AI compiler didn’t meet the quality standard. In other words, we oversample the places where the AI compiler and meta AI models need the most help. Once we’ve generated this data, we send it into the labeling stage, and the loop starts over.
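
As a rough illustration of stages 5 and 6, the sketch below uses least-confidence sampling as a stand-in for data selection and small random perturbations of failed items as a stand-in for data boosting. This page does not say which strategies super.AI actually uses, so treat both as assumptions. The `Item` class, its fields, and the function names `select_for_labeling` and `boost` are hypothetical and exist only for this example.

```python
import random
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Item:
    """Hypothetical data point; every field here is an assumption."""
    item_id: str
    features: List[float]
    confidence: float = 0.0           # assumed model confidence in its label, 0..1
    passed_qa: Optional[bool] = None  # None means the item has not been reviewed yet


def select_for_labeling(items: List[Item], budget: int) -> List[Item]:
    """Stage 5 sketch: pick the `budget` items the model is least confident about."""
    return sorted(items, key=lambda it: it.confidence)[:budget]


def boost(items: List[Item], copies_per_failure: int,
          noise: float = 0.05, seed: int = 0) -> List[Item]:
    """Stage 6 sketch: oversample QA failures by adding jittered copies of them."""
    rng = random.Random(seed)
    boosted = []
    for it in items:
        if it.passed_qa is not False:
            continue  # only items that explicitly failed QA are oversampled
        for k in range(copies_per_failure):
            jittered = [x + rng.gauss(0.0, noise) for x in it.features]
            # New items start unscored and unreviewed, so they go back to labeling.
            boosted.append(Item(f"{it.item_id}-aug{k}", jittered))
    return boosted


# Usage: three labeled items, one of which failed QA.
items = [
    Item("a", [1.0, 2.0], confidence=0.9, passed_qa=True),
    Item("b", [0.0, 1.0], confidence=0.4, passed_qa=False),
    Item("c", [3.0, 3.0], confidence=0.6, passed_qa=True),
]
picked = select_for_labeling(items, budget=2)
new_items = boost(items, copies_per_failure=3)
print([it.item_id for it in picked])     # ['b', 'c']
print([it.item_id for it in new_items])  # ['b-aug0', 'b-aug1', 'b-aug2']
```

In this sketch, both the selected items and the generated items would then be sent back to stage 1 for labeling, which is what closes the loop.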

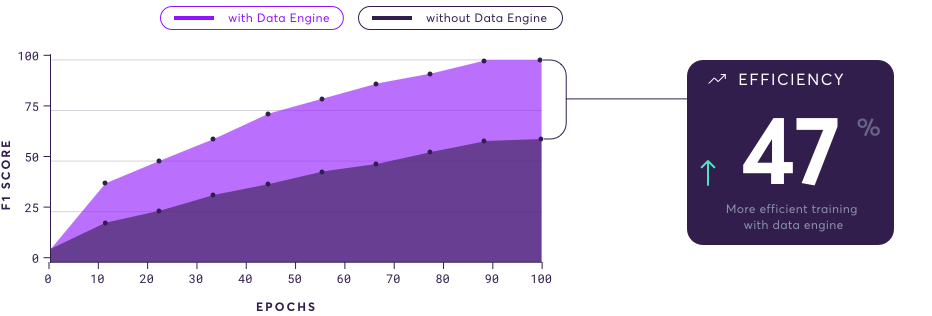

Benefits of the data engine

The super.AI data engine is an efficient way to use existing data and synthesise new data to improve the weakest parts of the AI compiler and the meta AI models. The result is a higher-quality, more efficient overall system that runs at lower cost and higher speed.
