Data Programming

Learn how data programming helps super.AI platform users produce high-quality labeled data that is specific to their use case.

Data programming is a powerful and flexible tool that makes it easy to produce high-quality labeled data for your specific use case with minimal effort.

Below, we walk you through:

- What makes for good labeled data

- How we can improve quality, cost, and speed

- How data programming functions like an assembly line

- Some real-world examples of where super.AI has helped businesses achieve their goals using data programming

What makes for good labeled data?

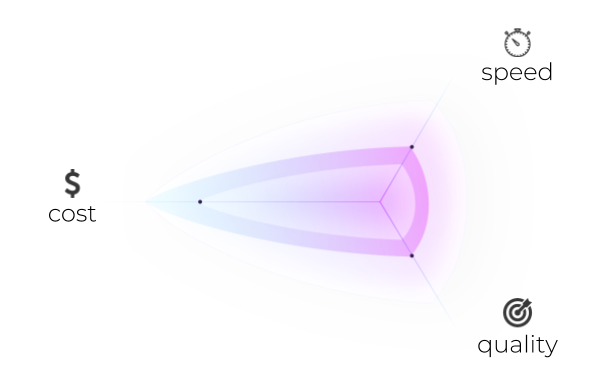

What counts as good labeled data depends on the type of project. In every case, though, it comes down to a tradeoff between the following three factors:

- Quality

  - Different projects measure quality differently, but common measures are precision, recall, or the F1 score, which combines the two as their harmonic mean. Improving quality can increase the cost and decrease the speed of labeling.

- Cost

  - Labeling tasks vary in complexity: sometimes labeled outputs contain multiple labels, and different projects have different demands (e.g., a project might require a quick turnaround on labeling tasks while maintaining a consistently high quality). These and many other factors determine the cost.

- Speed

  - Task complexity affects speed. Tasks can often be completed faster if needed, although this might result in a drop in quality, an increase in cost, or both.
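To make the quality measures above concrete, here is a minimal Python sketch of how precision, recall, and the F1 score relate. The function names and the example counts are illustrative assumptions, not part of the super.AI platform or its API.

```python
from __future__ import annotations


def precision_recall(true_pos: int, false_pos: int, false_neg: int) -> tuple[float, float]:
    """Compute precision and recall from raw counts of labeling outcomes."""
    predicted = true_pos + false_pos   # everything the labelers marked as positive
    actual = true_pos + false_neg      # everything that really was positive
    precision = true_pos / predicted if predicted else 0.0
    recall = true_pos / actual if actual else 0.0
    return precision, recall


def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall; 0.0 when both are zero."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Hypothetical counts: 80 correct labels, 20 spurious labels, 40 missed labels.
p, r = precision_recall(true_pos=80, false_pos=20, false_neg=40)
print(f"precision={p:.2f} recall={r:.2f} f1={f1_score(p, r):.2f}")
# prints: precision=0.80 recall=0.67 f1=0.73
```

Because F1 is a harmonic mean, it sits closer to the weaker of the two measures, so a project cannot reach a high F1 score by maximizing only precision or only recall.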

Your project’s requirements determine which of these three is most important and which can be cut back on. For example, labeled data used in an app for identifying products probably doesn’t need to be as high quality as that used in medical diagnoses, but it probably needs to be faster and cheaper. The relationship between the three variables is complex, but super.AI automatically calculates the tradeoff for you based on your requirements.

How can we improve quality, cost, and speed?

All three of these variables are powered by the same thing: labeling sources.

Improving quality means finding better labelers or using more labelers of the same quality. Likewise, speed improves with faster labelers or with additional labelers working in parallel, and cost comes down with cheaper labelers.
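One way to see why more labelers of the same quality can raise overall quality is a simple majority vote. The sketch below is an illustration only: it assumes a binary labeling task, labelers who make independent errors, and majority voting as the way labels are combined. None of these assumptions is a description of how super.AI actually combines labels, and the function name is hypothetical.

```python
from math import comb


def majority_vote_accuracy(labeler_accuracy: float, num_labelers: int) -> float:
    """Probability that a majority of independent labelers is correct.

    Assumes a binary task and an odd number of labelers, so ties cannot occur.
    """
    if num_labelers < 1 or num_labelers % 2 == 0:
        raise ValueError("num_labelers must be a positive odd number")
    p, n = labeler_accuracy, num_labelers
    votes_needed = n // 2 + 1  # smallest number of correct votes that wins
    # Sum the binomial probabilities of every outcome with a correct majority.
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(votes_needed, n + 1))


# Hypothetical labelers who are each right 80% of the time.
for n in (1, 3, 5):
    print(n, f"{majority_vote_accuracy(0.8, n):.3f}")
# prints:
# 1 0.800
# 3 0.896
# 5 0.942
```

Under these assumptions, each additional pair of labelers raises accuracy but also multiplies the labeling cost, which is the quality versus cost tradeoff described above.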

For our enterprise clients, we can also use AI in some cases to dramatically reduce costs and improve speed while at least maintaining quality levels. We do this with a meta AI, which forms part of the AI compiler.

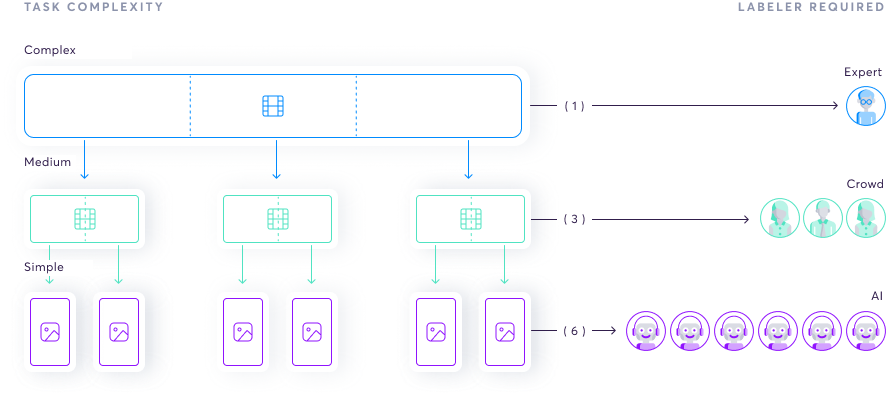

Data programming is like an assembly line

The complexity of many labeling tasks risks making them prohibitively expensive and error prone. Our realization was that data programming has to work like the assembly lines that revolutionised the manufacturing industry. This allows us to make data labeling, and therefore AI, affordable and accessible.

We built the super.AI system to break down complex tasks into smaller, simpler tasks. These tasks require less skill to complete, leading to lower costs. As we continue to simplify the tasks, we get to the point where there’s the potential to process the data using AI, further decreasing costs.

The benefits of data programming

- Saves on cost

  - Less skill is required, leading to cheaper labour and AI automation

- Increases quality

  - Allows for labour specialization, as labelers are focused on specific, context-free tasks

  - We can use transfer learning (if you can detect object edges in one scenario, you can do it in any scenario)

  - Fewer errors, as tasks are simpler

- Scalable

  - Simple tasks in isolation make training people easier

  - We can begin to automate with AI, which is faster, cheaper, and more scalable

  - It is easier to understand where errors occur and correct them, which lowers output variability

Real-world example: meeting summarization

We’ve helped companies to automate key processes and build new products on the back of our data labeling. Here’s one example to give you a taste of how we can help.

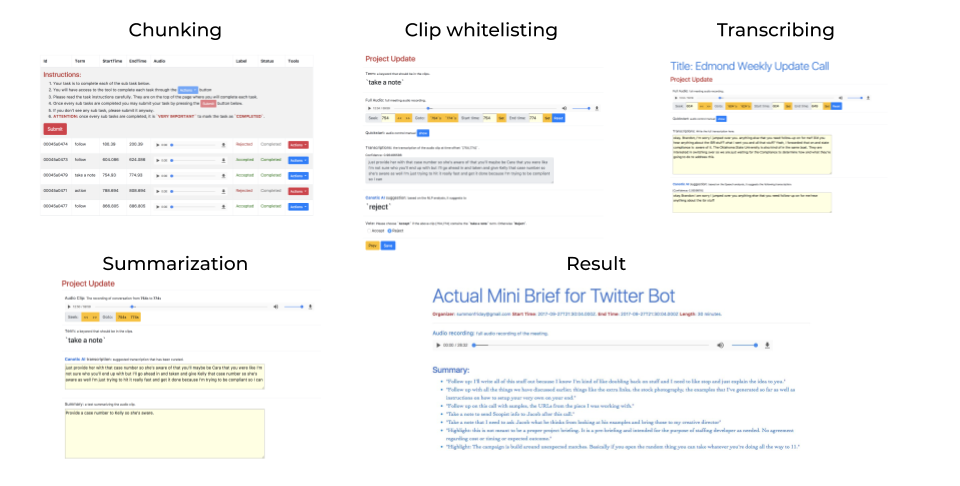

LogMeIn used super.AI to power an AI bot that sits in on meetings and provides a summary that includes action items, takeaways, and overall sentiment.

We took LogMeIn’s raw audio and processed it in the following steps:

- Audio chunking: split the recording up into small chunks, each around 5 seconds in duration

- Whitelisting: is there any meaningful information in the clip? If so, the clip is whitelisted.

- Transcription of whitelisted clips: we add context to the meaningful information (who said it, what is it about, when did they say it, why?)

- Summarization: given the annotated transcription, we produce a summary of the clip

- Chunk amalgamation: we take the resulting collection of summaries and combine them into the final meeting summary
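The steps above can be sketched as a small assembly line of functions, each doing one simple job. This is a toy illustration rather than the actual LogMeIn pipeline: short text strings stand in for audio chunks, every stage is a trivial placeholder for work that labelers or models would do, and all names (`Chunk`, `is_meaningful`, `run_pipeline`, and so on) are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Chunk:
    index: int  # position of the chunk in the recording
    text: str   # stand-in for roughly 5 seconds of audio


def chunk_recording(recording: List[str]) -> List[Chunk]:
    """Audio chunking: here the input is assumed to be pre-split segments."""
    return [Chunk(i, segment) for i, segment in enumerate(recording)]


def is_meaningful(chunk: Chunk) -> bool:
    """Whitelisting: toy rule that rejects silence and filler words."""
    return chunk.text.strip().lower() not in {"", "um", "uh"}


def transcribe(chunk: Chunk) -> str:
    """Transcription: placeholder that attaches the chunk position as context."""
    return f"[chunk {chunk.index}] {chunk.text.strip()}"


def summarize(transcript: str) -> str:
    """Summarization: placeholder that returns the transcript unchanged."""
    return transcript


def amalgamate(summaries: List[str]) -> str:
    """Chunk amalgamation: join the per-chunk summaries into one summary."""
    return "\n".join(summaries)


def run_pipeline(recording: List[str]) -> str:
    chunks = chunk_recording(recording)
    whitelisted = [c for c in chunks if is_meaningful(c)]
    transcripts = [transcribe(c) for c in whitelisted]
    summaries = [summarize(t) for t in transcripts]
    return amalgamate(summaries)


print(run_pipeline(["Ship the report by Friday", "um", "  ", "Budget approved"]))
```

Because each stage has a single narrow input and output, any one of them can be swapped for a human labeler, a model, or a mix of both without changing the rest of the line.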
